Is this it?

Is this how the AI singularity finally happens?

A model that can actually improve itself -- by itself? One that should have even the AI researchers worried about losing their jobs?

MiniMax M2.7 is a self-evolving model. Let that sink in.

MiniMax M2.7 represents a fundamental shift from static training to self-evolving intelligence: an autonomous loop where the model identifies its own logic gaps and refines its own architecture, ultimately delivering frontier-class reasoning at a fraction of the cost.

Look, this is not about incremental intelligence improvements anymore -- this is the promise (nightmare?) of endless, exponential, recursive self-evolving intelligence.

You roll your eyes and yawn (same old hype, right?) -- until I tenderly inform you that MiniMax M2.7 literally handled 30-50% of its own learning research and ran 100+ iteration cycles to improve itself.

A "self-critiquing" model that can tell when it's hallucinating? That knows when it's not thinking straight?

By replacing human labeling with a recursive self-critique loop, MiniMax M2.7 became its own most rigorous auditor—systematically mapping its own logic gaps to drive the hallucination rate down to an industry-leading 34%.
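To make "recursive self-critique loop" a bit more concrete, here is a toy sketch of the general draft, critique, revise pattern. To be clear, this is not MiniMax's actual pipeline (those details aren't given here): `self_critique_loop`, `toy_critique`, and `toy_revise` are hypothetical names invented for illustration, and the "critic" is a simple word check standing in for a real model.

```python
from typing import Callable, List, Tuple


def self_critique_loop(
    draft: str,
    critique: Callable[[str], List[str]],
    revise: Callable[[str, List[str]], str],
    max_rounds: int = 5,
) -> Tuple[str, int]:
    """Revise a draft until the critic finds no issues or rounds run out.

    Returns the final draft and the number of revision rounds performed.
    """
    for round_idx in range(max_rounds):
        issues = critique(draft)
        if not issues:
            # The critic is satisfied, so stop early.
            return draft, round_idx
        draft = revise(draft, issues)
    return draft, max_rounds


# Hypothetical stand-ins for a model's critic and reviser.
OVERCONFIDENT = {"definitely": "probably", "always": "often"}


def toy_critique(text: str) -> List[str]:
    """Flag overconfident words still present in the text."""
    return [word for word in OVERCONFIDENT if word in text]


def toy_revise(text: str, issues: List[str]) -> str:
    """Soften only the first flagged word, one fix per round."""
    first = issues[0]
    return text.replace(first, OVERCONFIDENT[first])


final, rounds = self_critique_loop(
    "This definitely always works", toy_critique, toy_revise
)
print(final, rounds)
```

In a real system the critic and reviser would be model calls rather than string replacements, and the interesting part is what the loop does with the issues it finds, not the loop itself.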

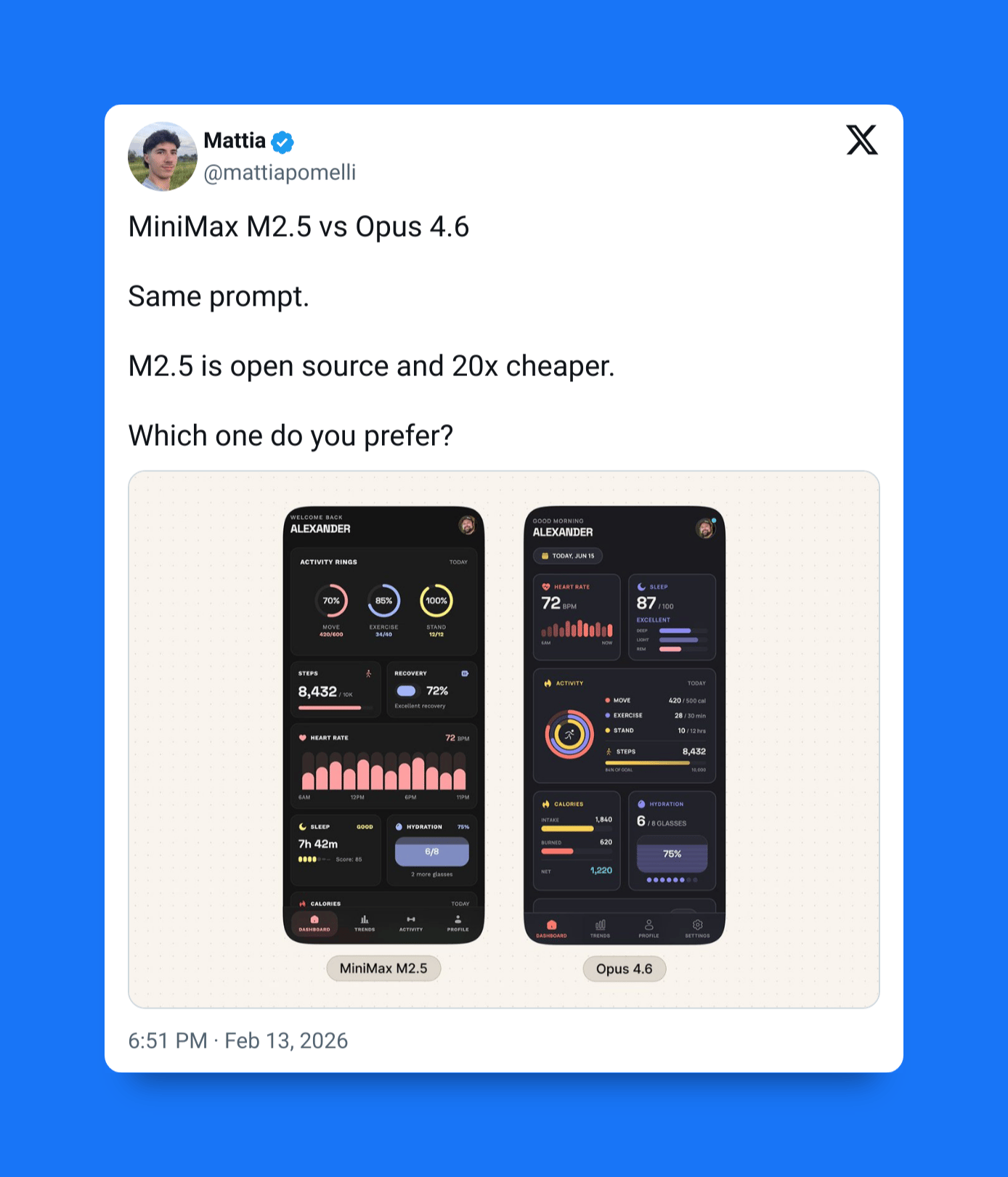

(Pictured: the previous model in the MiniMax M series, M2.5, going up against Claude Opus 4.6.)

Compare that 34% hallucination rate to the debatably deserving attention-grabbers of our time:

Claude 4.6 Sonnet: 46%

Gemini 3.1 Pro: 50%

If you've ever seen something as earth-shatteringly groundbreaking as this in a new model before, just let me know, okay? Because I highly doubt I have...

Instead of: