
Awesome news:

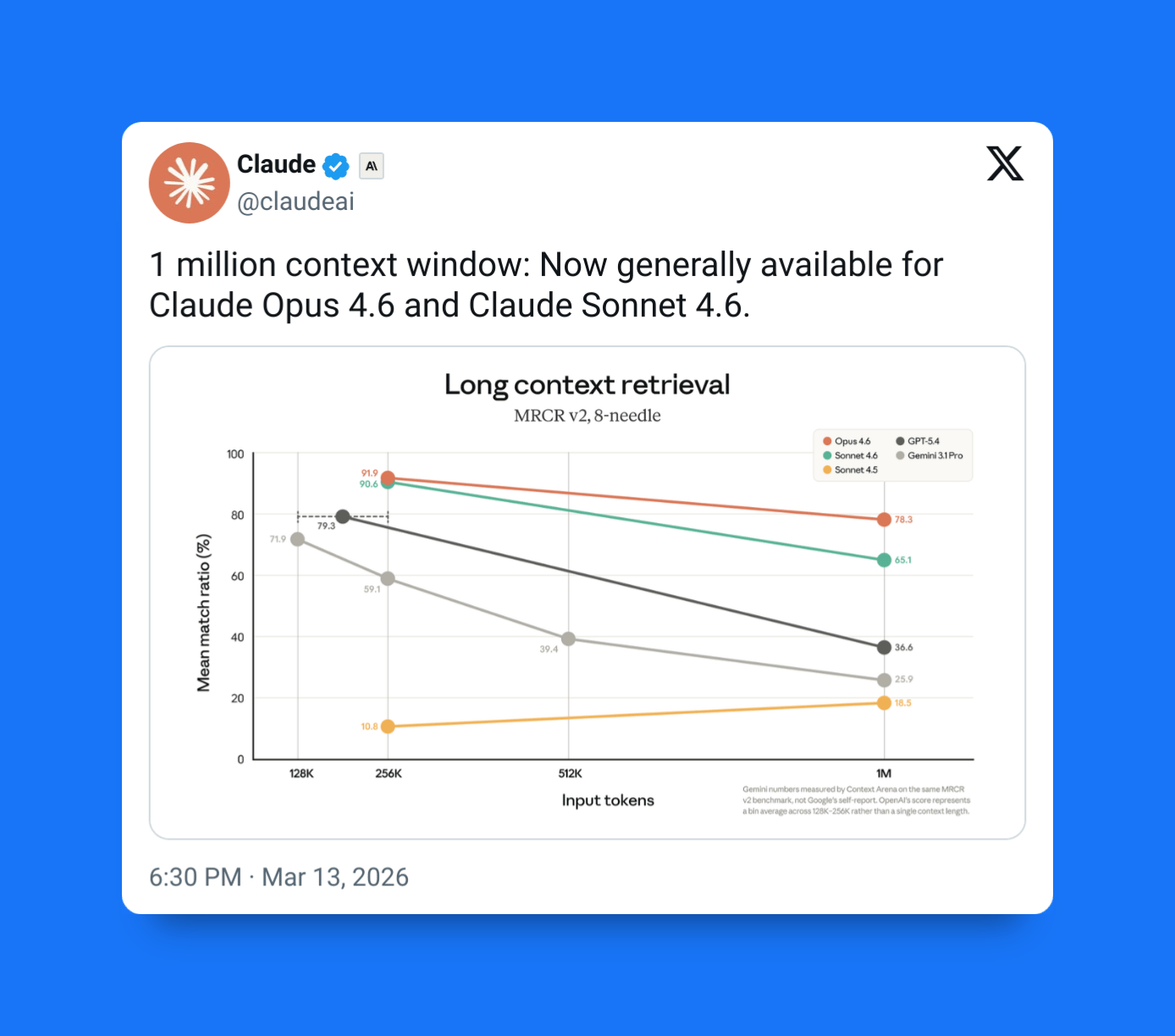

The new 1 million token context window in Claude Sonnet 4.6 and Opus 4.6 is now generally available to everyone.

And this isn't just a bigger number for Anthropic to brag about -- it fundamentally changes how AI can assist us with real software development.

Before:

Coding with AI often meant constantly shrinking problems to fit the model's memory, using context compaction and other hacky techniques.

But now:

Entire codebases, multi-hour debugging sessions, and intricately connected system context can all stay in view at once.

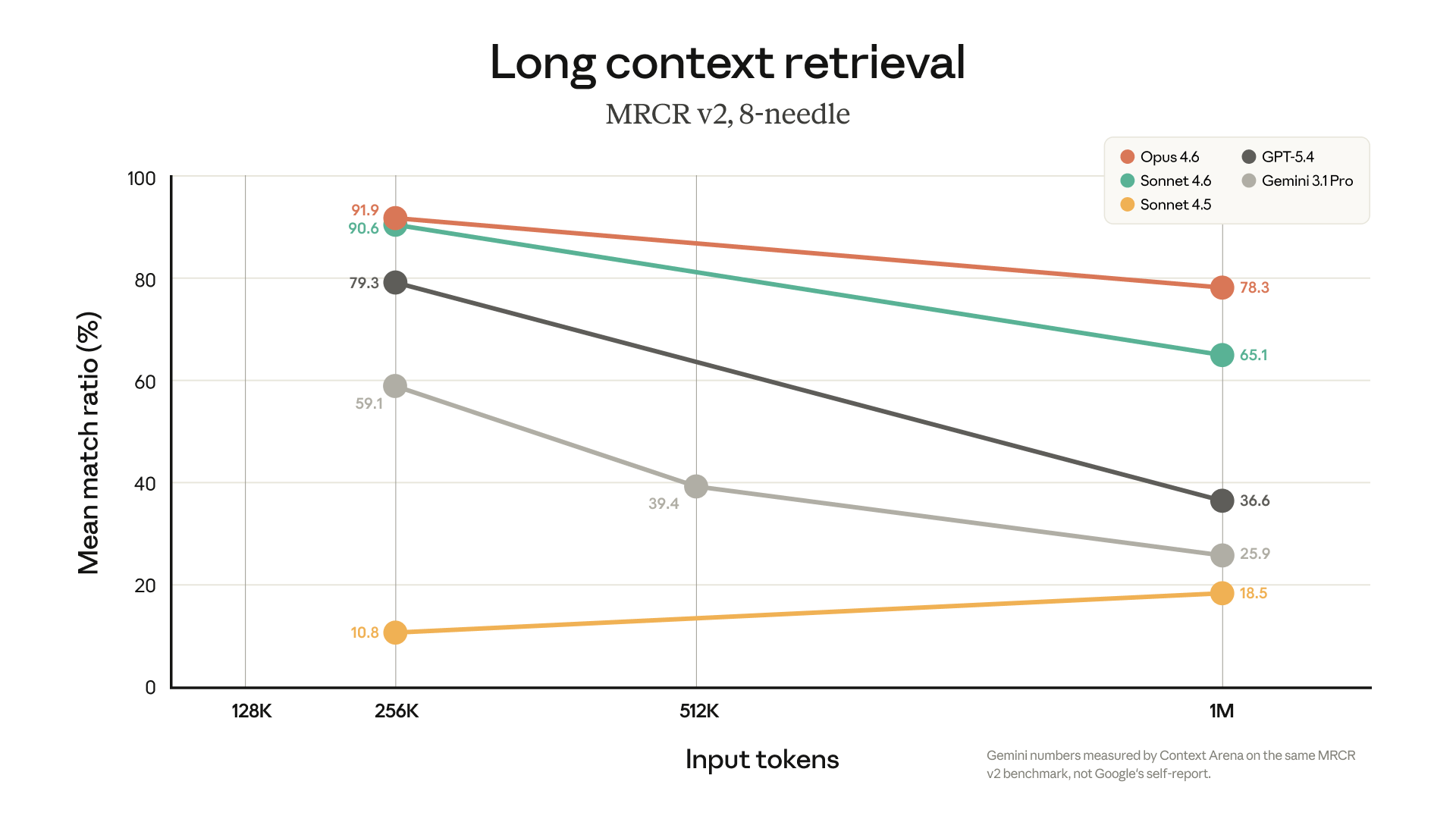

And not all 1M-token context windows are equal. Other models like GPT and Gemini offer them too, but Claude's 1M context outshines them thanks to its superior long-context retrieval, as seen in major benchmarks.

With 1M context now the default, no extra pricing for long inputs, and major improvements in long-context retrieval, developers can finally use AI on the most enormous projects without any of the usual limitations and accuracy losses.

Let's look at five key ways these changes translate into meaningful upgrades for all our everyday coding workflows.

1. Superior whole-codebase understanding leads to smarter fixes and refactors

Lost context is one of the most common frustrations with AI coding tools.

Models often understand a single file well but struggle with how that file fits into the broader system -- especially when they can't fit all the related files into the context.

With 1M tokens available, Claude can hold far more project information at once, including:

- Multiple services or modules
- API contracts and schemas
- Tests and test expectations
- Documentation and architecture notes
- Logs, stack traces, and debugging context

Instead of constantly reintroducing information, developers can load much more of the codebase into context from the start.

This leads to improvements in tasks like:

- Debugging issues that span multiple files
- Performing architecture-aware refactors
- Maintaining consistency with existing patterns
- Understanding how changes affect dependent modules

Claude becomes much more like a collaborator that understands the full structure of the project -- no matter how massive it gets.
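To make "loading the codebase into context from the start" a little more concrete, here is a minimal Python sketch that concatenates source files into one prompt while tracking a rough token budget. Everything in it is an illustrative assumption: the `pack_codebase` helper, the file-header format, and especially the 4-characters-per-token estimate, which is a common rule of thumb and not Claude's actual tokenizer.

```python
from pathlib import Path

CONTEXT_BUDGET_TOKENS = 1_000_000  # window size discussed in this article
CHARS_PER_TOKEN = 4  # rough heuristic (assumption), not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate; a real tokenizer will give different counts."""
    return len(text) // CHARS_PER_TOKEN + 1


def pack_codebase(root, suffixes=(".py", ".md"), budget=CONTEXT_BUDGET_TOKENS):
    """Concatenate matching files under `root` until the estimated budget is hit.

    Returns (prompt_text, included_paths, skipped_paths).
    """
    parts, included, skipped = [], [], []
    used = 0
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix not in suffixes:
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        # Label each file so the model can tell where one ends and the next begins.
        block = f"=== {path.relative_to(root)} ===\n{text}\n"
        cost = estimate_tokens(block)
        if used + cost > budget:
            skipped.append(path)  # does not fit; report it instead of truncating
            continue
        parts.append(block)
        included.append(path)
        used += cost
    return "".join(parts), included, skipped
```

Calling `pack_codebase("path/to/repo")` returns the combined text plus the lists of included and skipped files, so you can see at a glance whether the whole project fits or which files had to be left out.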

2. Pinpointing critical details hidden in massive codebases

Large context windows only help if the model can actually find the right information inside them.

Anthropic measures this with long-context retrieval tests called needle-in-a-haystack benchmarks, which test whether a model can locate a specific fact buried inside extremely large inputs.
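The idea behind a needle-in-a-haystack test can be sketched in a few lines of Python. This is a simplified single-needle illustration, not the MRCR benchmark itself; the function names, the filler lines, and the substring-based scoring are all assumptions made for the example.

```python
import random


def build_haystack(needle: str, filler: list[str], n_lines: int,
                   position: float, seed: int = 0) -> str:
    """Build a long input of filler lines with one `needle` line buried inside.

    `position` is a fraction in [0, 1]: 0.0 puts the needle first, 1.0 last.
    """
    rng = random.Random(seed)  # seeded so the haystack is reproducible
    lines = [rng.choice(filler) for _ in range(n_lines)]
    lines.insert(int(position * n_lines), needle)
    return "\n".join(lines)


def score_retrieval(answer: str, expected: str) -> bool:
    """Count the answer as correct if it contains the expected fact."""
    return expected.lower() in answer.lower()
```

In a real evaluation, the haystack would be sent to the model along with a question about the buried fact, and `score_retrieval` would be applied to the model's reply across many needle positions and input lengths.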

Recent results show us just how much this capability has improved:

- Opus 4.6 scored 76% on the 1M-token MRCR benchmark
- Sonnet 4.5 scored around 18% on similar tests

This huge jump shows…
