This will permanently transform the way so many developers interact with AI coding tools

Wow, Anthropic is on fire -- they just gave us a brilliant new voice mode feature for Claude Code, and it is going to transform the way many developers interact with AI coding tools.

Instead of carefully typing every instruction, you can now speak your intent directly to an AI agent that understands and works inside your codebase.

[image: voice mode up and ready to go in Claude Code]

This moves us from prompt-writing to near-real-time collaboration -- communicating closer than ever to the speed of thought while explaining problems, delegating tasks, and refining instructions naturally and intuitively.

And it's not just generic speech recognition -- this was built specifically for coding.

With one-command activation, real-time streaming transcription, and combined voice-plus-keyboard input, Claude Code is going to start feeling less like a chatbot and more like a coding partner.

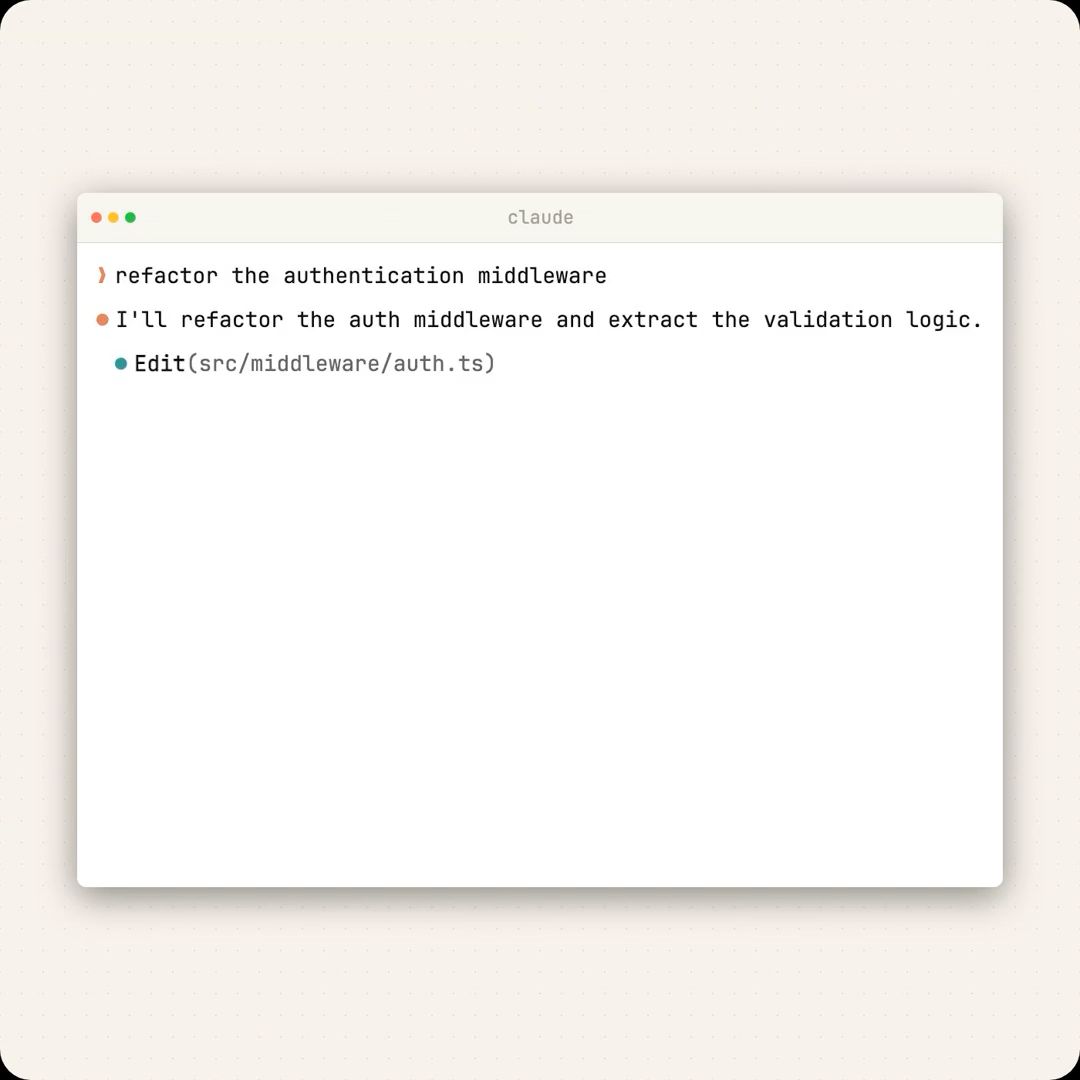

[demo: Claude Code voice mode in action]

Enable it with 1 command

The setup is intentionally lightweight.

You just type `/voice` to enable voice mode. No external dictation tool or additional setup is required.

Voice becomes simply another input layer inside the existing workflow.

Fine-tuned for coding, not generic conversation

Claude Code voice mode isn’t just speech added to a chatbot.

The key point is that the transcription itself is optimized for coding workflows, not everyday conversation. That means it’s tuned to handle the kinds of things developers actually say when working:

syntax-heavy phrases

function and class names

file paths and CLI commands

library names and technical terminology

So that means we can say things like:

“Open `auth-middleware.ts` and trace where the token validation fails.”

“Refactor the `UserService` class to use dependency injection.”

“Run the test suite and show me the failing cases.”

And Claude Code can reliably capture and act on those instructions.
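To get a feel for why coding-aware transcription matters, here is a minimal sketch of one way spoken phrases could be snapped onto identifiers that already exist in a project. This is purely illustrative and is not how Claude Code does it internally (that isn't documented here). The identifier list, the `snap_to_identifier` helper, and the 0.8 cutoff are all assumptions made up for the example.

```python
import difflib

# Hypothetical identifiers harvested from a repository (file names, classes).
KNOWN_IDENTIFIERS = ["auth-middleware.ts", "UserService", "token-validator.ts"]


def normalize(text: str) -> str:
    # Compare on lowercase alphanumerics only, so "user service" ~ "UserService".
    return "".join(ch for ch in text.lower() if ch.isalnum())


def snap_to_identifier(spoken: str, cutoff: float = 0.8) -> str:
    """Return the closest known identifier, or the spoken text if none is close."""
    by_key = {normalize(name): name for name in KNOWN_IDENTIFIERS}
    match = difflib.get_close_matches(
        normalize(spoken), list(by_key), n=1, cutoff=cutoff
    )
    return by_key[match[0]] if match else spoken


print(snap_to_identifier("user service"))
print(snap_to_identifier("auth middleware dot ts"))
print(snap_to_identifier("the failing cases"))
```

A generic dictation tool would leave "user service" as two ordinary words. A coding-aware layer can recognize that the speaker almost certainly means the `UserService` class, while leaving plain phrases like "the failing cases" untouched.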

Voice becomes a way to direct a coding agent, not just chat with one.

Zero-cost transcription lowers the barrier

This is one of the biggest selling points:

Voice transcription tokens are free.

This removes a major adoption barrier.

Benefits include:

No need to worry about usage costs while speaking

Easier to use voice for rough or exploratory prompts

Encourages natural thinking out loud during development

If transcription were metered, people would hesitate to use it casually. Removing that friction makes voice a default option when it’s faster.

Real-time streaming is what makes it usable

The feature supports real-time streaming transcription.

This means:

Your speech appears in the prompt as you talk

Voice and keyboard input work together

You can seamlessly switch between speaking and typing

Example hybrid flow:

Speak the high-level task

Type a specific filename or function

Continue speaking to explain constraints or context

This hybrid interaction is what makes voice mode genuinely useful instead of gimmicky.
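The hybrid flow above can be sketched as a small prompt buffer that accepts both streamed speech and typed text. This is a simplified model under stated assumptions, not Claude Code's actual implementation: the `PromptBuffer` class, its method names, and the interim/final distinction (common in streaming speech recognizers) are hypothetical and only there to illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass
class PromptBuffer:
    """Hypothetical prompt buffer that merges typed text with streamed speech."""

    committed: list[str] = field(default_factory=list)  # finalized segments, in order
    interim: str = ""  # latest unconfirmed speech hypothesis

    def on_speech(self, text: str, is_final: bool) -> None:
        # Interim hypotheses overwrite each other; only a final one is committed.
        if is_final:
            self.committed.append(text.strip())
            self.interim = ""
        else:
            self.interim = text.strip()

    def on_typed(self, text: str) -> None:
        # Typing commits any pending speech first so segment order is preserved.
        if self.interim:
            self.committed.append(self.interim)
            self.interim = ""
        self.committed.append(text.strip())

    def render(self) -> str:
        # What the user would see in the prompt right now.
        parts = self.committed + ([self.interim] if self.interim else [])
        return " ".join(p for p in parts if p)


buf = PromptBuffer()
buf.on_speech("trace where the token", is_final=False)  # shown while talking
buf.on_speech("Trace where the token validation fails in", is_final=True)
buf.on_typed("auth-middleware.ts")  # exact filename is quicker to type
buf.on_speech("and keep the public API unchanged", is_final=True)
print(buf.render())
```

The design point is that voice and keyboard write into the same ordered buffer, so you never have to pick one input mode for the whole prompt.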

How to use it

Go beyond autocomplete. In this hands-on session, you’ll design reliable AI agents using structured memory, task-isolated sub-agents, and composable skills. See a real bug-fix pipeline in action and learn patterns you can apply with Claude, LangChain, CrewAI, or AutoGen.

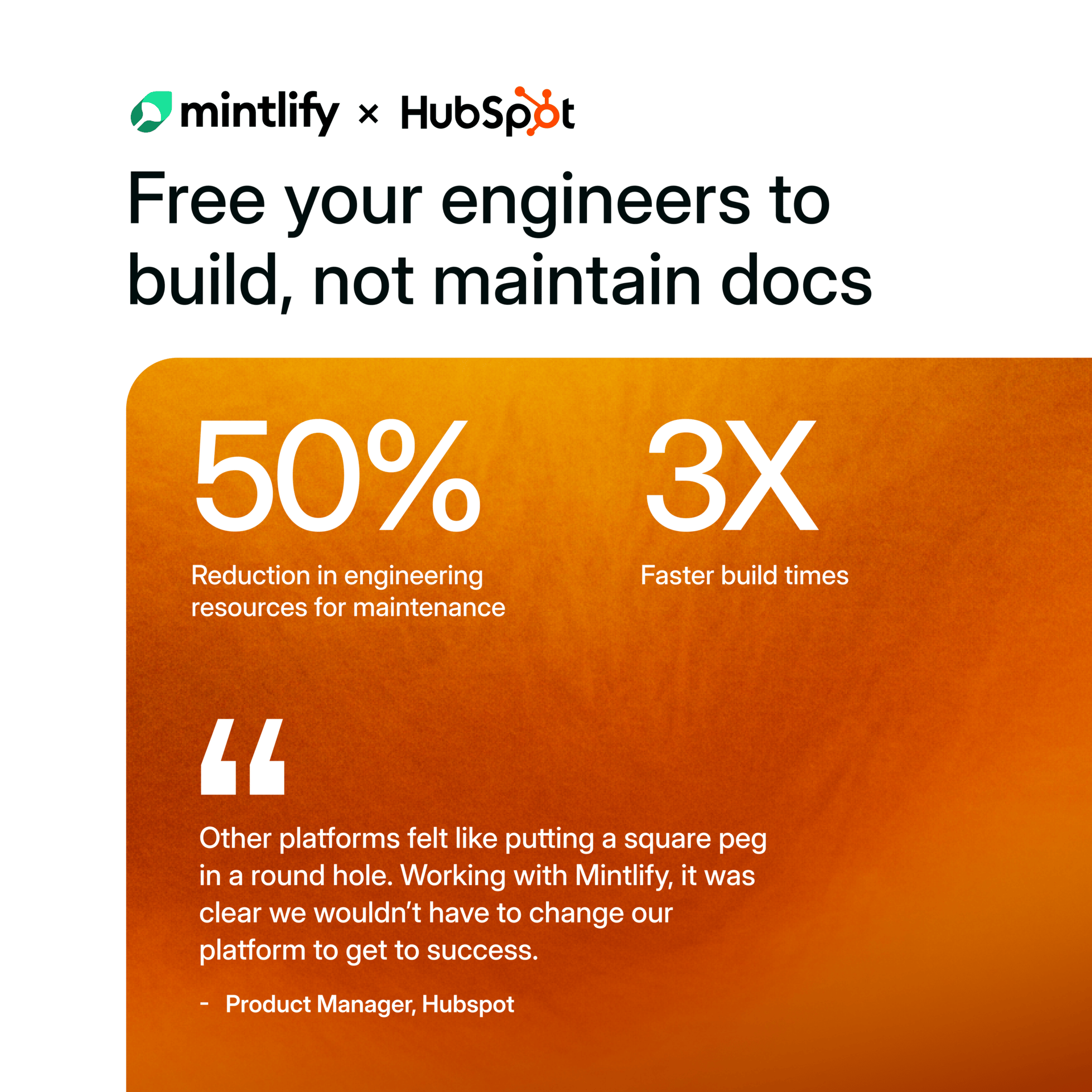
